Here I just want to describe the general philosophy of working with old code and the attitude that I think must be adopted. Everything written here is quite simple and obvious to anyone who has already worked on at least one legacy project.

Apart from two simple examples, you won't find language-specific refactoring techniques here. For those I highly recommend Working Effectively with Legacy Code and Refactoring: Improving the Design of Existing Code.

Accept it

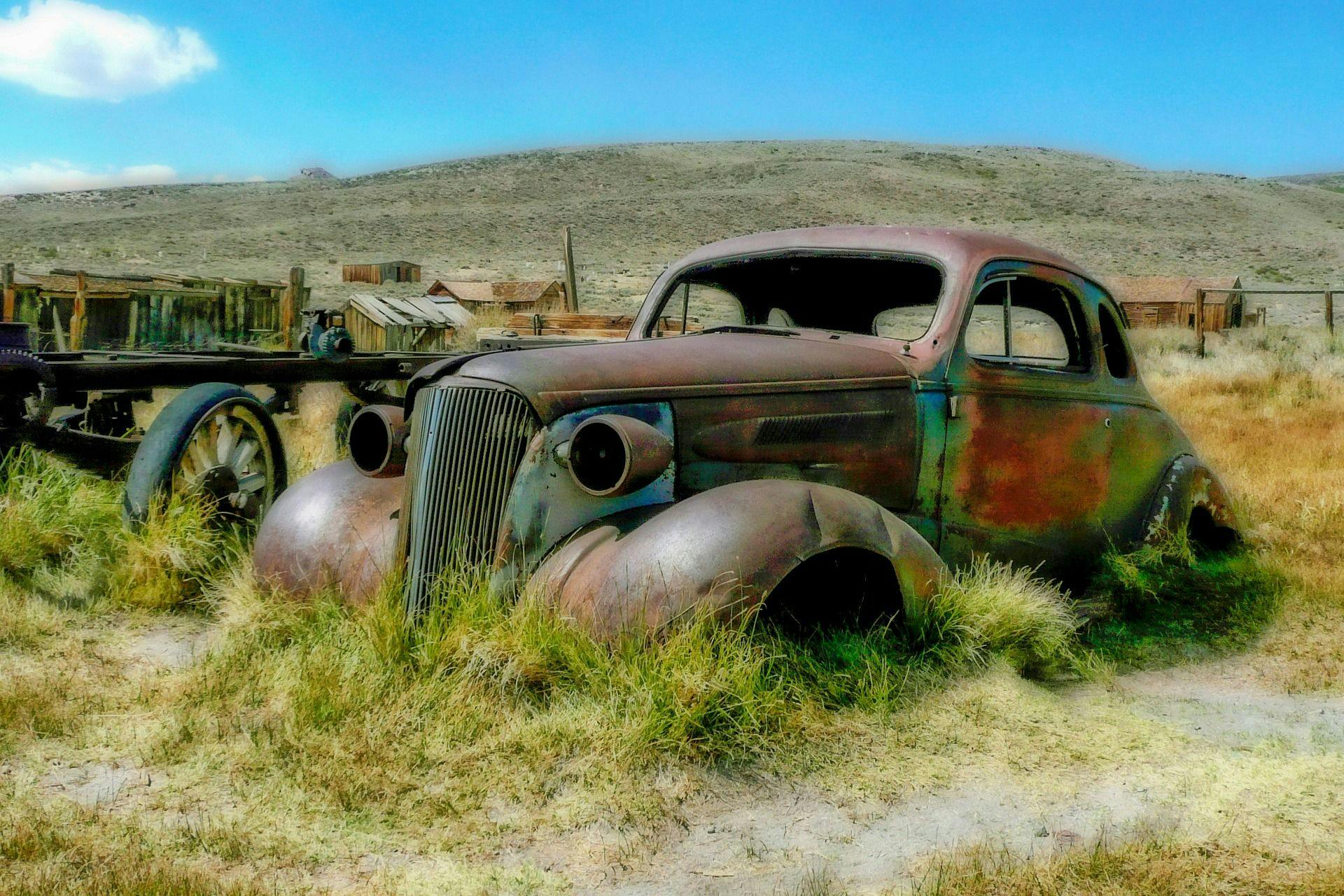

Your legacy code is yours now

The first thing I think we must accept when given a legacy codebase is that we now own it. We are responsible for it, and there's nobody else who can relieve us of it. Also, we should never forget that our main task is to make the project successful, not to write clean code. Clean code is just a tool that helps us create better projects.

Even when the codebase you are given is a mess and is written against all the best practices, it's still a tool. Focus on how to make the project better with the tool you have.

Don't rewrite everything

Of course, this is the first thing that comes to mind when you open your newly adopted legacy codebase. But it is not the best solution. Joel Spolsky has already explained this a lot better than I ever could, so just look through his article if you haven't already.

Create a plan

I've seen a lot of developers trying to distance themselves from the legacy code. When asked to extend existing code, they open a file, close their eyes and just write something in the middle. They think that the legacy code will be removed eventually, so there's no point in learning its internal structure and extending it in a future-proof manner.

Others do the opposite: they write new code to play well with the existing structure. Since the existing code is ugly, they continue writing ugly code. Their reasoning is somewhere along the lines of "I can't write clean code, because it won't integrate with what we have."

Both approaches end up with a mess. You can blame the unknown authors of the legacy code, but only for a limited time. In the first week it's understandable (though counterproductive). After a year, nobody will care that there were other developers before you. You must plan the exact actions you will take to improve (or replace) the existing codebase. Otherwise you'll end up with an ugly codebase and nobody else to blame.

Do the right thing

Write tests

Well, duh. Without tests you'll be afraid to make any radical changes. Or, even worse, you won't be afraid. I don't think granular unit tests are required here. High-level integration tests that treat whole modules as a black box are enough: just call the module and check the result, without going deep into what exactly has broken and why.
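To make the black-box idea concrete, here is a minimal sketch in Python. The `legacy_build_report` function is a made-up stand-in for an old module entry point, and the expected string is assumed to have been recorded from the current behaviour rather than derived from a spec.

```python
import unittest


def legacy_build_report(orders):
    """Hypothetical stand-in for an old, hard-to-read module entry point."""
    total = 0
    lines = []
    for o in orders:
        price = o["price"] * o["qty"]
        if o.get("vip"):
            price = price * 0.9
        total = total + price
        lines.append("%s: %.2f" % (o["name"], price))
    lines.append("TOTAL: %.2f" % total)
    return "\n".join(lines)


class ReportBlackBoxTest(unittest.TestCase):
    def test_known_input_gives_recorded_output(self):
        orders = [
            {"name": "book", "price": 10.0, "qty": 2},
            {"name": "pen", "price": 1.5, "qty": 4, "vip": True},
        ]
        # Recorded from the current behaviour; we only check the final
        # result and do not look inside the module at all.
        expected = "book: 20.00\npen: 5.40\nTOTAL: 25.40"
        self.assertEqual(legacy_build_report(orders), expected)
```

A test like this can be run with `python -m unittest`. It won't tell you why something broke, only that the observable result changed, which is usually enough to stop a bad refactoring early.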

Sprout pattern

When writing new code, though, it's a good idea to write a unit test for it. Some might wonder how to write a unit test for a piece of code that needs to be injected right in the middle of a decade-old, 500-line function. In this situation you can create a new method or function with the new logic, cover it with a test and then just call it from inside that giant function. Adding more lines to an already enormous function is not the brightest idea, so the sprout approach is a good thing anyway, but it also helps with tests.
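A rough sketch of the sprout approach, assuming a made-up discount rule as the new logic. The names `apply_loyalty_discount` and `process_order` are hypothetical, and `process_order` only stands in for the giant function.

```python
def apply_loyalty_discount(amount, years_as_customer):
    """New logic, sprouted into its own function so it can be unit tested."""
    if years_as_customer >= 5:
        return round(amount * 0.90, 2)
    if years_as_customer >= 2:
        return round(amount * 0.95, 2)
    return amount


def process_order(order):
    """Stand-in for the giant legacy function (imagine 500 lines here)."""
    amount = order["price"] * order["qty"]
    # ... hundreds of untouched legacy lines ...
    # The only change made to the old function is this single call:
    amount = apply_loyalty_discount(amount, order["years_as_customer"])
    # ... more untouched legacy lines ...
    return amount


def test_apply_loyalty_discount():
    # The new logic is tested on its own, without setting up the
    # whole legacy function around it.
    assert apply_loyalty_discount(100.0, 0) == 100.0
    assert apply_loyalty_discount(100.0, 2) == 95.0
    assert apply_loyalty_discount(100.0, 5) == 90.0
```

The old function grows by one line, and the new behaviour has a test that does not depend on anything else in the legacy code.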

Coverage

Measuring test coverage is always a good idea. When working with unknown code, it's even more important. Even if you don't have enough tests, just knowing which parts of the system might be unstable helps a lot.

Create correct interfaces

I mean interfaces in the OOP sense. When using badly architected interfaces and APIs, you can find yourself in a vicious circle:

- New code is created with bad architecture, because it has to work with old code, which is badly written

- Old code is removed

- New code that replaces the removed parts is also written with bad architecture, because it has to work with the code added in the first step, which was forced to be ugly.

- Here we are - there are no traces of the old code anymore, but the overall architecture is still bad.

I think that all the new code you write must be clean and use all the best practices, regardless of what you have in the system right now. Instead of thinking of the simplest way to solve your current task, think of what would be the correct architecture of the overall system. Write the new code as if other parts of the codebase have that correct architecture.
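As an illustration only, here is one way this could look in Python. `CustomerRepository`, `Customer` and `welcome_message` are all hypothetical names. The point is that the new code depends on the interface we wish the system had, not on whatever the legacy code happens to expose.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Customer:
    customer_id: int
    email: str


class CustomerRepository(Protocol):
    """The interface we wish the system already had (hypothetical)."""

    def find_by_id(self, customer_id: int) -> Optional[Customer]: ...


class InMemoryCustomerRepository:
    """Simple implementation of the interface, handy for tests."""

    def __init__(self, customers):
        self._by_id = {c.customer_id: c for c in customers}

    def find_by_id(self, customer_id):
        return self._by_id.get(customer_id)


def welcome_message(repo: CustomerRepository, customer_id: int) -> str:
    """New code: depends only on the clean interface, not on legacy internals."""
    customer = repo.find_by_id(customer_id)
    if customer is None:
        return "Unknown customer"
    return f"Welcome back, {customer.email}!"
```

Whatever sits behind `CustomerRepository` in production can be as old as it likes. The new function does not need to know.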

Use adapters and facades

Hide the imperfections of the existing system with the Adapter and Facade patterns. Then just throw away the adapters along with the old code. Adapters can be as ugly as needed inside, but they have to provide a clean interface.

For example, on one of our projects we were forced to integrate with a third-party service API to pull some data. Their API was quite strange and was lacking one of the endpoints we needed. We wrote the ugliest piece of code ever to log in, basically crawl their service and package the data as JSON. We wrote it in such a way that it looked as if we were calling an actual API. Pretty slow, but still a single API endpoint. Under the hood it did all sorts of hacks (authentication, clicking through pages, retrying on crashes, parsing HTML), but on the outside it was just a RESTful endpoint. When the API was fixed, we just threw away the adapter, and we didn't have to change anything in the existing code.
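The following is not the code from that project, just a small sketch of the same idea. It assumes a hypothetical `fetch_page` callable that returns the HTML of one page, and a made-up `<li data-id="...">` markup. All the hacks live inside the adapter, and callers only see `list_items()`.

```python
import re
from typing import Callable, Dict, List


class ScrapingItemsAdapter:
    """Looks like a clean API client from the outside; hacks live inside.

    `fetch_page` is a hypothetical callable returning the HTML of one page
    (login, cookies and so on would be hidden behind it in real code).
    """

    _ROW = re.compile(r'<li data-id="(\d+)">([^<]*)</li>')

    def __init__(self, fetch_page: Callable[[int], str], max_retries: int = 3):
        self._fetch_page = fetch_page
        self._max_retries = max_retries

    def list_items(self) -> List[Dict[str, object]]:
        """The one clean 'endpoint' the rest of the code calls."""
        items: List[Dict[str, object]] = []
        page = 1
        while True:
            rows = self._ROW.findall(self._fetch_with_retry(page))
            if not rows:  # an empty page means everything has been crawled
                return items
            items.extend({"id": int(i), "name": n.strip()} for i, n in rows)
            page += 1

    def _fetch_with_retry(self, page: int) -> str:
        last_error = None
        for _ in range(self._max_retries):
            try:
                return self._fetch_page(page)
            except ConnectionError as error:  # retry on flaky responses
                last_error = error
        raise RuntimeError(f"page {page} failed after retries") from last_error


# Usage with a fake page source instead of a real service:
PAGES = {
    1: '<ul><li data-id="1">alpha</li><li data-id="2">beta</li></ul>',
    2: '<ul><li data-id="3">gamma</li></ul>',
}


def fake_fetch(page: int) -> str:
    return PAGES.get(page, "<ul></ul>")


client = ScrapingItemsAdapter(fake_fetch)
items = client.list_items()
```

Once a real endpoint exists, another class with the same `list_items()` method can replace this one, and the calling code stays untouched.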

Make it better with each step

The main rule that covers this entire topic is the following: with each step you take, make the overall quality better. You should not allow your new code to be badly written. Don't be lazy. Everything you add to the project must make it better. Specific technical solutions for this are usually easy to find when you actually look for them.